How to Scrape Data from a Website to Excel?

There are over 1.11 billion websites and over 50 billion web pages. These websites contain a wide variety of information in different formats: text, video, images, or tables. This information must be scraped or extracted for many applications, from powering search engines to running large language models.

Web scraping is almost as old as the web itself. Its use cases range from everyday applications, such as search engines, to recent ones, such as training the LLMs that power AI.

In this blog, we will discuss what web scraping is and how to scrape data from a website to Excel.

What is web scraping?

Web scraping is the process of retrieving or extracting unstructured data from websites and storing it in a structured format. This structured data can then be used to run analysis, research, or even train AI models.

If you want to scrape data from a website to Excel, copying and pasting the webpage content is the easiest option. But it’s not always the best one: the pasted data is rarely formatted properly, and making it usable can take considerable time. Web scraping tools avoid this manual copying and restructuring by converting unstructured website data into a structured Excel format within seconds, saving you time and effort.

Looking to scrape data from websites? Try Nanonets™ Website Scraping Tool for free and quickly scrape data from any website.

Use cases for web scraping

Web scraping has many use cases across teams and industries. Some common ones are:

- Competitor research - Businesses scrape competitor websites to compare product offerings and monitor prices. Web scraping for market research is a good way for organizations to get to know the pulse of the market.

- Lead generation - Generating high-quality leads is extremely important to growing a business. Web scraping for lead generation is a good way to gather potential lead contact information – such as email addresses and phone numbers.

- Search Engine Optimization - Scraping webpages to monitor keyword rankings and analyze competitors' SEO strategies.

- Sentiment analysis - Most online businesses scrape review sites and social media platforms to understand what customers are talking about and how they feel about their products and services.

- Legal and compliance - Companies scrape websites to ensure their content is not being used without permission or to monitor for counterfeit products.

- Real estate markets - Monitoring property listings and prices is crucial for real estate businesses to stay competitive.

- Integrations - Many applications use data that needs to be extracted from a website. Developers scrape websites to integrate this data into such applications, for example, scraping website data to train LLMs for AI development.

Is web scraping legal?

While web scraping itself isn't illegal, especially for publicly available data on a website, it's important to tread carefully to avoid legal and ethical issues.

The key is respecting the website's rules. Its terms of service (TOS) and robots.txt file might restrict scraping altogether or outline acceptable practices, like how often you can request data to avoid overwhelming its servers. Additionally, certain types of data are off-limits, such as copyrighted content or personal information collected without someone's consent. Data protection regulations like GDPR (Europe) and CCPA (California) add another layer of complexity.

Finally, web scraping for malicious purposes like stealing login credentials or disrupting a website is a clear no-go. By following these guidelines, you can ensure your web scraping activities are both legal and ethical.
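The robots.txt check described above can be automated before any page is requested. Here is a minimal Python sketch using the standard library's `urllib.robotparser`. The robots.txt content, the URLs, and the `my-scraper` user agent are made-up examples, and the helper names are ours. Note that this only covers robots.txt; it says nothing about a site's terms of service.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
# A real scraper would load it from the site instead, e.g. with
# parser.set_url("https://www.example.com/robots.txt") and parser.read().
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def may_scrape(parser: RobotFileParser, url: str, user_agent: str = "my-scraper") -> bool:
    """Return True if the robots.txt rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(SAMPLE_ROBOTS_TXT)
print(may_scrape(parser, "https://www.example.com/products"))
print(may_scrape(parser, "https://www.example.com/private/report"))
# Requested pause between requests in seconds, or None if the file sets none.
print(parser.crawl_delay("my-scraper"))
```

If `may_scrape` returns False for a page, the safest choice is to skip it.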

How to scrape data from a website to Excel?

This blog explores five ways to scrape data from a website to Excel. Whether you're a business owner, analyst, or data enthusiast, it will give you the tools and information to scrape data from a website and turn it into valuable insights.

We will look at each of the following methods in turn:

- Manually copy and paste data from a website to Excel

- Using an automated web scraping tool

- Using Excel VBA

- Using Excel Power Queries

- Web scraping with Python

#1. Manually copy and paste data from a website to Excel

This is the most commonly used method to scrape data from a website to Excel. While it is the simplest method, it is also the most time-consuming and error-prone. The pasted data is often unstructured and difficult to process.

This method is best for a one-time use case. However, it is not feasible when web scraping is to be done for multiple websites or at regular intervals.

#2. Using an automated web scraping tool

If you want to scrape data from a website to Excel automatically and instantly, try a no-code tool like Nanonets website scraper. This free web scraping tool can instantly scrape website data and convert it into an Excel format. Nanonets can also automate web scraping processes to remove any manual effort.

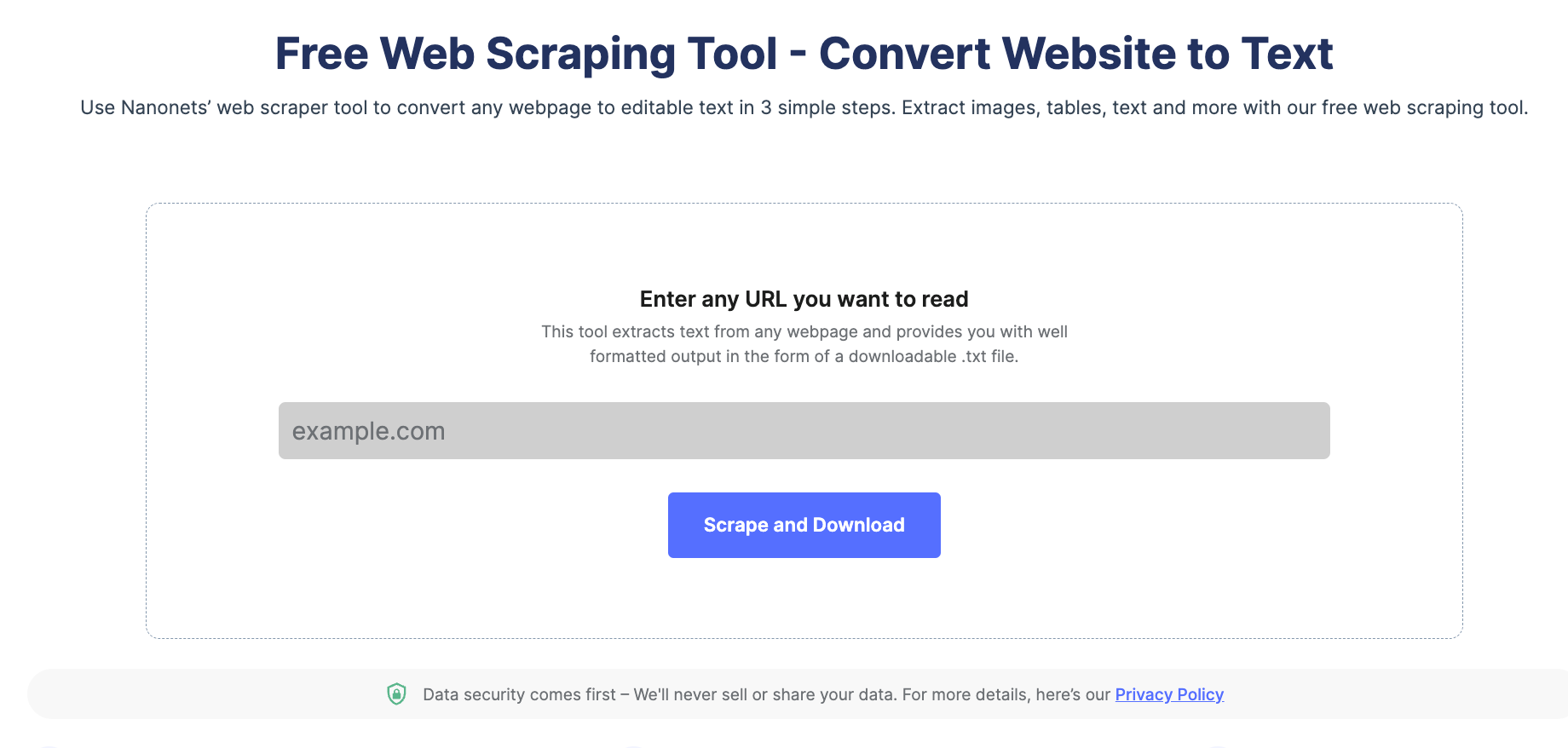

Here are three steps to scrape website data to Excel automatically using Nanonets:

Step 1: Head to Nanonets' website scraping tool and insert your URL.

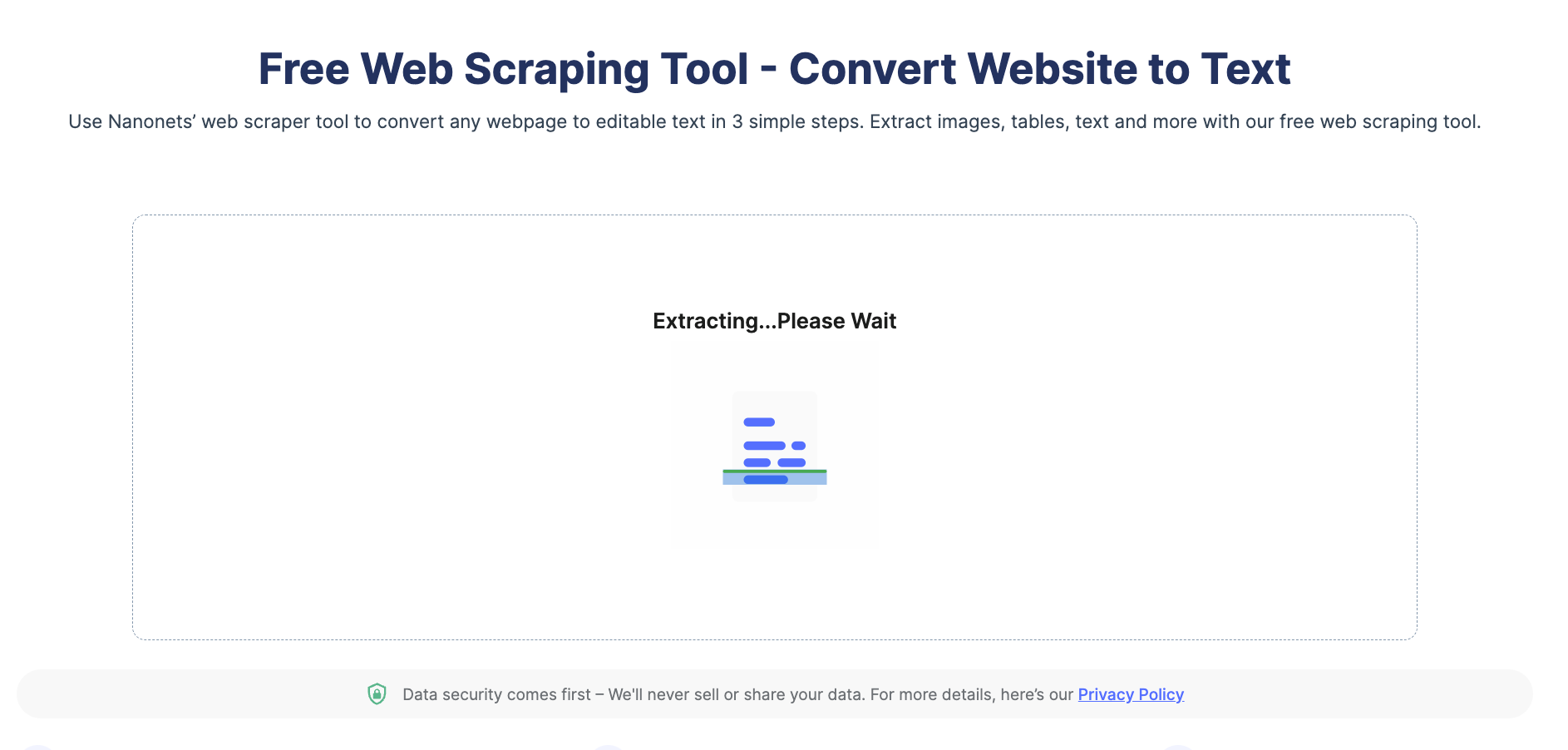

Step 2: Click on 'Scrape and Download'.

Step 3: Once done, the tool downloads the Excel file with the scraped website data automatically.

You can also automate the entire web scraping process by setting up the workflow on Nanonets. Here's a quick demo of how to achieve this -

Automate web scraping with Nanonets Workflow

Scrape data from Websites to Excel with Nanonets™ Website Scraping Tool for free.

#3. Using Excel VBA

Excel VBA is powerful and can easily automate complex tasks, such as website scraping to Excel. Let’s see how to use it to scrape a website to Excel.

Step 1: Open Excel and create a new workbook.

Step 2: Open the Visual Basic Editor (VBE) by pressing Alt + F11.

Step 3: In the VBE, go to Insert -> Module to create a new module.

Step 4: Copy and paste the following code into the module:

    Sub ScrapeWebsite()
        'Requires two references (Tools -> References in the VBE):
        '"Microsoft WinHTTP Services, version 5.1" and "Microsoft HTML Object Library"

        'Declare variables
        Dim objHTTP As New WinHttp.WinHttpRequest
        Dim htmlDoc As New HTMLDocument
        Dim htmlElement As IHTMLElement
        Dim i As Long
        Dim url As String

        'Set the URL to be scraped
        url = "https://www.example.com"

        'Make a synchronous GET request to the URL
        objHTTP.Open "GET", url, False
        objHTTP.send

        'Parse the HTML response
        htmlDoc.body.innerHTML = objHTTP.responseText

        'Loop through the table cells (td elements) and extract their text
        i = 1
        For Each htmlElement In htmlDoc.getElementsByTagName("td")
            'Print the text to the Immediate window and write it to column A
            Debug.Print htmlElement.innerText
            ActiveSheet.Cells(i, 1).Value = htmlElement.innerText
            i = i + 1
        Next htmlElement
    End Sub

Excel module for website scraping

Step 5: Change the URL in the code to the address of the website you want to scrape.

Step 6: Run the macro by pressing F5 or clicking the "Run" button in the VBE toolbar.

Step 7: Check the Immediate window (View -> Immediate Window) to see the scraped data.

The macro lists the text of every table cell (td element) it finds on the page.

What should you consider while using VBA to scrape data from a webpage?

While Excel VBA is a potent tool for web scraping, there are several drawbacks to consider:

- Complexity: VBA can be complex for non-coders, which makes issues difficult to troubleshoot.

- Limited features: The approach shown here works on the raw HTML returned by the server. It struggles with complex HTML structures and cannot see content that a page renders with JavaScript.

- Speed: Excel VBA can be slow when scraping large websites.

- IP blocking risks: Your IP address may get blocked if you send a large number of requests to a website.
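The blocking risk is not specific to VBA; it applies to any scraper that sends many requests in a short time. One common mitigation is to pause between requests. Below is a minimal Python sketch of that idea. The function names, the two-second default, and the example URLs are our own assumptions, and a pause may lower the chance of being blocked but does not guarantee anything.

```python
import time
from typing import Callable, Dict, Iterable
from urllib.request import urlopen


def fetch_html(url: str) -> str:
    """Download one page and return its body as text (needs network access)."""
    with urlopen(url) as response:
        return response.read().decode("utf-8", errors="replace")


def fetch_politely(
    urls: Iterable[str],
    fetch: Callable[[str], str] = fetch_html,
    delay_seconds: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, str]:
    """Fetch each URL in order, pausing between consecutive requests."""
    results = {}
    for index, url in enumerate(urls):
        if index > 0:
            # No pause is needed before the very first request.
            sleep(delay_seconds)
        results[url] = fetch(url)
    return results


# Example call (commented out because it needs network access):
# pages = fetch_politely(["https://www.example.com/a", "https://www.example.com/b"])
```

The `fetch` and `sleep` parameters are injectable so the loop can be tried out without touching the network or actually waiting.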

#4. Using Excel Power Queries

Excel Power Query can scrape website data easily. It imports data from web pages, typically the tables it detects on the page, into Excel. Let’s see how to use Excel Power Query to scrape web pages in Excel.

Step 1: Create a new Workbook.

Step 2: On the home screen, select New, and search for ‘Power Query’ in the search bar.

Step 3: Open the Power Query tutorial and press Create.

Step 4: Click on Data > Get & Transform > From Web.

Step 5: Paste the URL that you want to scrape into the text box and click OK.

Step 6: Under Display Options in the Navigator Pane, select the Results table. Power Query will preview it in the Table View pane on the right.

Step 7: Click on Load. Power Query will transform and load the data as an Excel table.

Step 8: To refresh the data, right-click on the data in the worksheet and select "Refresh."

Scrape website data using Excel Power Query

What are the drawbacks of using Excel Power Query to extract webpage data to Excel?

- Power Query can’t scrape data from dynamic webpages or webpages with complex HTML structures.

- Power Query may extract data with the wrong type. For example, a number or a date may be extracted as text.

- Power Query relies on the webpage's HTML structure. If it changes, the query may fail or extract incorrect data.
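The type problem in the second point shows up with any scraping method, because everything on a web page arrives as text. If the cleanup is done in code rather than in Excel, it could look like the Python sketch below. The helper names are ours, and the sketch assumes US-style numbers (comma as thousands separator) and ISO dates by default; other formats would need their own rules.

```python
from datetime import date, datetime
from typing import Optional


def to_number(text: str) -> Optional[float]:
    """Convert text such as '1,234.50' or '$99' to a float, or None if it is not numeric."""
    # Assumption: commas are thousands separators and a currency symbol may lead.
    cleaned = text.strip().replace(",", "").lstrip("$€£")
    try:
        return float(cleaned)
    except ValueError:
        return None


def to_date(text: str, fmt: str = "%Y-%m-%d") -> Optional[date]:
    """Convert text to a date using the given format, or None if it does not match."""
    try:
        return datetime.strptime(text.strip(), fmt).date()
    except ValueError:
        return None


print(to_number("1,234.50"))
print(to_number("n/a"))
print(to_date("2024-03-15"))
print(to_date("15/03/2024", fmt="%d/%m/%Y"))
```

Returning None for values that do not parse keeps bad cells visible instead of silently turning them into zeros.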

#5. Scrape websites using Python

Web scraping with Python is popular owing to the abundance of third-party libraries that can scrape complex HTML structures, parse text, and interact with HTML forms. Some popular Python web scraping libraries are listed below:

- urllib3 is a powerful HTTP client library for Python. It makes it easy to perform HTTP requests programmatically. It handles HTTP headers, retries, redirects, and other low-level details, making it an excellent library for web scraping.

- BeautifulSoup allows you to parse HTML and XML documents. Using its API, you can easily navigate through the HTML document tree and extract tags, meta titles, attributes, text, and other content. BeautifulSoup is also known for its robust error handling.

- MechanicalSoup automates the interaction between a web browser and a website efficiently. It provides a high-level API for web scraping that simulates human behavior. With MechanicalSoup, you can interact with HTML forms, click buttons, and interact with elements like a real user.

- Requests is a simple yet powerful Python library for making HTTP requests. It is designed to be easy to use and intuitive, with a clean and consistent API. With Requests, you can easily send GET and POST requests, and handle cookies, authentication, and other HTTP features. It is also widely used in web scraping due to its simplicity and ease of use.

- Selenium allows you to automate web browsers such as Chrome, Firefox, and Safari and simulate human interaction with websites. You can click buttons, fill out forms, scroll pages, and perform other actions. It is also used for testing web applications and automating repetitive tasks.

- Pandas allows you to store and manipulate data in various formats, including CSV, Excel, JSON, and SQL databases. Using Pandas, you can easily clean, transform, and analyze data extracted from websites.
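To show how the pieces fit together (fetch a page, parse its HTML, save the rows in a file Excel can open), here is a minimal sketch. To keep it runnable without installing anything, it uses standard-library stand-ins for the libraries above: `urllib.request` in place of Requests, `html.parser` in place of BeautifulSoup, and `csv` in place of Pandas. The class and function names, the sample HTML, and the output file name are our own examples, and the parser only handles simple, non-nested tables.

```python
import csv
from html.parser import HTMLParser
from urllib.request import urlopen


class TableParser(HTMLParser):
    """Collect the text of <td>/<th> cells, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row being read, or None outside a row
        self._cell = None  # text pieces of the cell being read, or None outside a cell

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None and self._row is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:
                self.rows.append(self._row)
            self._row = None
            self._cell = None


def parse_table_rows(html):
    """Return all table rows found in the HTML as lists of cell text."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    return parser.rows


def rows_to_csv(rows, out):
    """Write the rows as CSV to an open text file or buffer."""
    csv.writer(out).writerows(rows)


def scrape_to_csv(url, path):
    """Fetch a page and save its table rows as a CSV file (needs network access)."""
    with urlopen(url) as response:
        html = response.read().decode("utf-8", errors="replace")
    # The utf-8-sig encoding adds a byte order mark, which helps Excel detect UTF-8.
    with open(path, "w", newline="", encoding="utf-8-sig") as f:
        rows_to_csv(parse_table_rows(html), f)


# Offline demo on a made-up HTML snippet:
SAMPLE_HTML = (
    "<table><tr><th>Product</th><th>Price</th></tr>"
    "<tr><td>Widget</td><td> 9.99 </td></tr></table>"
)
print(parse_table_rows(SAMPLE_HTML))

# Example call against a live page (commented out because it needs network access):
# scrape_to_csv("https://www.example.com", "scraped.csv")
```

With the third-party libraries installed, each stand-in can be swapped for its counterpart, and Pandas can write a native .xlsx file instead of CSV.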

While discussing data extraction techniques, it's crucial to streamline the entire data journey, from scraping to analysis. This is where Nanonets' Workflow Automation comes into play, revolutionizing how teams operate. Imagine seamlessly integrating scraped data into complex workflows within minutes, using AI to enhance tasks, and even involving human validation for precision. With Nanonets, you can connect the dots from data gathering to actionable insights, making your processes more efficient and your decisions smarter. Learn more about transforming your operations at Nanonets' Workflow Automation.

Automating webpage data extraction

Excel tools like VBA and Power Query can extract webpage data, but they often fail on complex webpage structures and might not be the best choice if you have to extract data from multiple pages daily. Pasting the URL, checking the extracted data, cleaning it, and storing it takes a lot of manual effort, particularly when the task must be repeated regularly.

Platforms like Nanonets can help you automate the entire process in a few clicks. You can upload the list of URLs into the platform. Nanonets will save tons of your time by automatically:

- Extracting data from the webpage - Nanonets can extract data from any webpage or headless webpages with complex HTML structures.

- Structuring the data - Nanonets can identify HTML structures and format the data to retain table structures, fonts, etc., so you don’t have to.

- Performing data cleaning - Nanonets can replace missing data points, format dates, replace currency symbols, and more in seconds using automated workflows.

- Exporting the data to a database of your choice - You can export the extracted data to Google Sheets, Excel, SharePoint, CRM, or any other database you choose.

If you have any requirements, you can contact our team, who will help you set up automated workflows to automate every part of the web scraping process.

Eliminate bottlenecks caused by manually scraping data from websites. Find out how Nanonets can help you scrape data from websites automatically.